Is AI really destroying the environment?

Students and faculty think so—are they right?

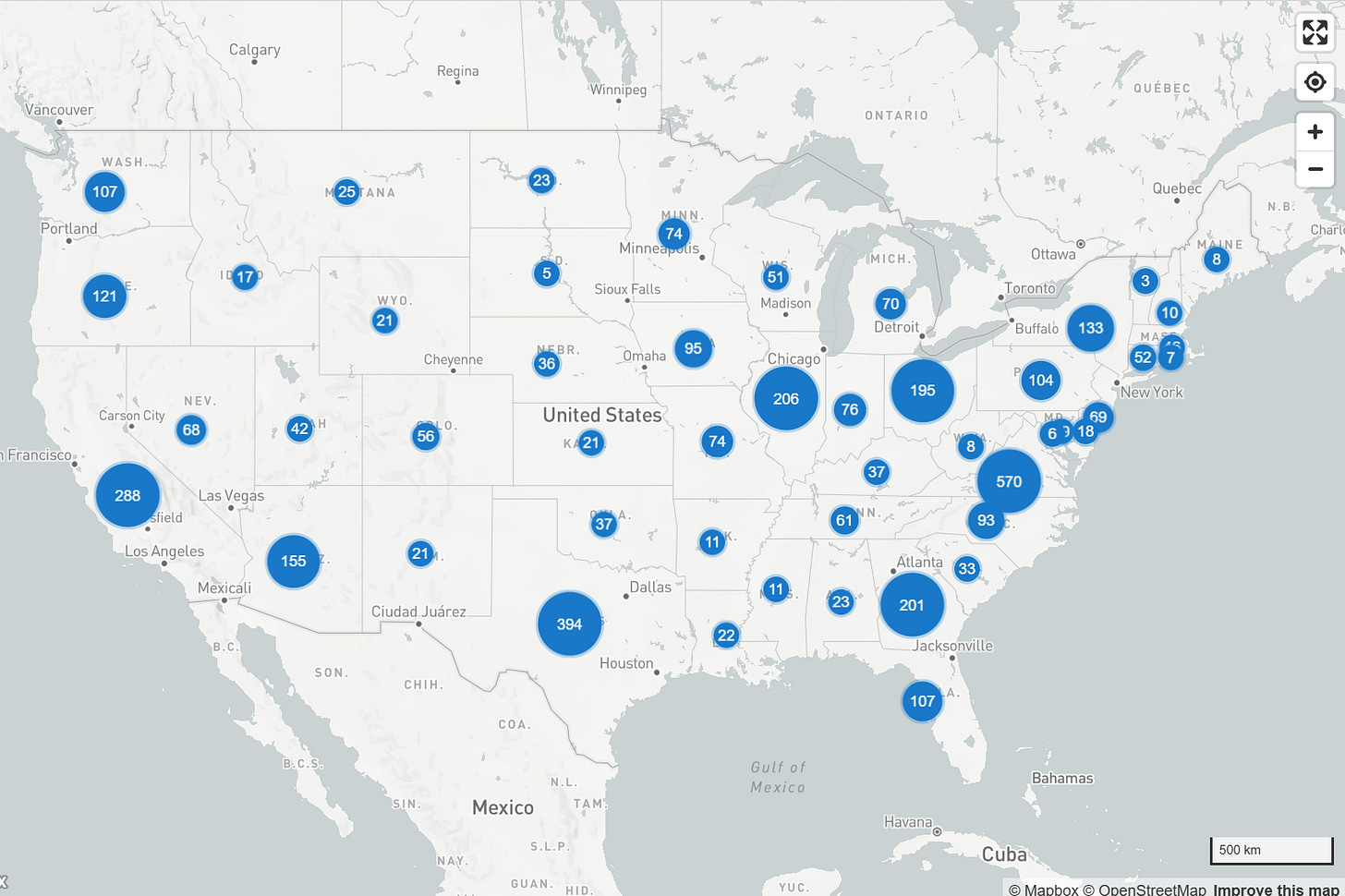

There are more data centers in the US (nearly 4,000) than there are Chipotle restaurants, according to estimates from Data Center Map, which has been tracking US data centers since 2007. These centers have become a flashpoint in public discourse about AI, prompting bipartisan backlash and growing concern among higher ed faculty.

But instructors aren’t the only ones questioning the environmental ethics of AI growth. In an online forum on teaching with AI hosted by Bryan Alexander last month, an instructor shared a message in chat about her students’ disposition toward the tools:

“Yesterday meeting a class for first time, there were several students completely opposed to AI…have noticed a shift in this direction. Environment was one reason.”

This ethics-driven resistance is not new: higher ed stakeholders have cited environmental devastation as one of many AI dealbreakers for years now. But discussions in recent months have become increasingly nuanced, with experts sending conflicting messages about the size of genAI’s carbon footprint.

For example, in that same online forum, AI thought leader Jose Antonio Bowen remarked that the water and electricity used to stream Netflix alone is “10 to 20 times more” than the energy used to power generative AI software, a claim loosely supported by usage estimates from Jisc, a UK-based nonprofit.

Other experts are looking beyond individual use patterns, drawing attention to initiatives like Stargate. Announced by OpenAI and the Trump administration, Stargate aims to build as many as 10 massive AI data centers, each of which could consume as much power as the entire state of New Hampshire.

I usually venture down these rabbit holes privately, but I chose to make this informal investigation public for two reasons. First, it’s clear that faculty’s and students’ perceptions of AI’s environmental consequences are influencing how they teach and learn, respectively; I feel it’s imperative to ensure that our perceptions are evidence-based. Second, I hope this investigation models Stanford University’s framework for ethical AI literacy: the ability to critically analyze the academic, social, and environmental consequences of AI tools and to adopt practices that promote ethical behavior. When our colleagues and students raise or dismiss AI’s carbon footprint as an ethical issue, I want to meet those concerns with care and accuracy.

So, how much does generative AI actually impact the environment, and in which ways? And how does this information inform our teaching moving forward? If you want a quick answer, skip to the “How should we approach environmental ethics surrounding AI in our teaching?” section at the end. But if you want a comprehensive deep dive on the environmental ethics of AI, buckle up and enjoy the ride.

Data centers have existed for decades. Why should we be concerned about them now?

Well…they’re growing rapidly and use water and electricity. Whether it’s “a lot” or “too much” is up for debate.

Salesforce. Google Drive. Amazon Prime. Twitch. These are among the thousands of popular apps and services powered by data centers, which also power AI tools like ChatGPT and Claude. When people warn about the environmental impacts of data centers, they’re mostly talking about the water and electricity consumed by hyperscale data centers, the warehouse-sized facilities often built in rural parts of the country. (Smaller, often private data centers don’t consume nearly as much energy as the hyperscale centers making national headlines.) Though hyperscale data centers existed before 2022, we’re now seeing an influx of centers built for the express purpose of powering genAI.

Electricity is used to train large language models (LLMs) and to power the processors (mostly GPUs) that run them. Imagine thousands of refrigerators packed side by side into a dark warehouse, but instead of keeping your food cold, they’re all crunching enormous numbers of calculations every second. Water is used to keep those processors (the fancy fridges) from overheating. These three activities (LLM training, ongoing computation, and cooling) are the main ways data centers consume electricity and water.

With more data centers comes more energy usage. But just how much water and energy do these facilities use, and does it warrant scrutiny of AI? Let’s look at this from both a micro and a macro perspective.

Does Netflix use more energy than ChatGPT? - The Micro Picture

Entering a single prompt into ChatGPT requires about 0.0029 kilowatt-hours (kWh) of energy. To put this in context, streaming Netflix in HD for one hour uses around 0.077 kWh. You’d have to prompt ChatGPT about 27 times (0.077 ÷ 0.0029 ≈ 26.6) to match the energy consumed by watching one hour of Netflix. Comparisons like this are likely where Jose Antonio Bowen got his ‘Netflix uses 10 to 20 times more energy’ claim.

This comparison suggests that individual AI prompting isn’t as environmentally costly as some might think. However, the math changes if, for example, you stream less than an hour a day, or ask AI to do complex tasks like generating videos, making a dinner reservation on your behalf, or doing your homework for you. The toll of our usage depends on how and how often we use genAI, and several online calculators, such as climatealigned.co’s, estimate the impact of specific tasks.
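To make the per-prompt arithmetic concrete, here is a minimal Python sketch that plugs in the two figures quoted above (0.0029 kWh per prompt and 0.077 kWh per hour of HD streaming). The function names and the sample day are hypothetical, and the sketch assumes every prompt is a simple text query; it does not model video generation, agentic tasks, or anything else that would cost more per request.

```python
# Energy figures quoted in this article; they are estimates, not measurements made here.
KWH_PER_PROMPT = 0.0029          # one simple text prompt to ChatGPT
KWH_PER_HD_STREAM_HOUR = 0.077   # one hour of HD Netflix streaming


def prompts_per_streaming_hour() -> float:
    """How many simple text prompts use as much energy as one hour of HD streaming."""
    return KWH_PER_HD_STREAM_HOUR / KWH_PER_PROMPT


def daily_energy_kwh(prompts: int, streaming_hours: float) -> tuple[float, float]:
    """Return (prompting kWh, streaming kWh) for one hypothetical day.

    Assumes every prompt is a simple text query; heavier tasks
    (images, video, agents) are not modeled and would cost more.
    """
    return prompts * KWH_PER_PROMPT, streaming_hours * KWH_PER_HD_STREAM_HOUR


if __name__ == "__main__":
    print(f"Prompts per hour of HD streaming: {prompts_per_streaming_hour():.1f}")
    # Hypothetical heavy-prompting, light-streaming day: 40 prompts, 30 minutes of Netflix.
    ai_kwh, tv_kwh = daily_energy_kwh(prompts=40, streaming_hours=0.5)
    print(f"Prompting: {ai_kwh:.3f} kWh, streaming: {tv_kwh:.4f} kWh")
```

In this hypothetical day of 40 prompts and half an hour of streaming, prompting comes out ahead (roughly 0.116 kWh versus 0.039 kWh), which is the kind of reversal the paragraph above describes.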

In summary, the ‘average person’ probably consumes more energy streaming their favorite show than prompting genAI, though this varies with individual use patterns. The macro picture, however, is more revealing.

Environmental racism is coming to a city near you. - The Macro Picture

According to O’Donnell and Crownhart’s analysis of a 2024 report, 4.4% of all energy in the US is consumed by data centers. Because not all data centers power AI tools, AI’s share of that figure is smaller still. O’Donnell and Crownhart also cited a Department of Energy report projecting that by 2028, more than half of the energy consumed by data centers will be used for AI. This suggests that more data centers will dedicate their resources to supporting the US’s AI infrastructure.

The remaining 95.6% is consumed by other sectors, like transportation, manufacturing, and homes and residential buildings. But energy consumption isn’t the only measure worth examining. Let’s take a closer look at the outsized environmental effects of that 4.4%.

Environmental Impacts

Pollution

Building a single hyperscale data center can take 12 to 36 months. That’s one to three years of construction noise, followed by many years of light and air pollution once the facility is running. The air pollution would come from the fossil fuels used to power these facilities; the Trump administration, for example, is incentivizing tech companies to power their facilities with coal.

Ecosystem Disruption

As data centers expand and compete for real estate, many are being built in rural areas, which can lead to deforestation that destroys habitats and disrupts ecosystems.

Environmental Racism

Many have pointed to the history of environmental racism in the US, in which people of color have been redlined into cities and neighborhoods that are undesirable at best and deadly at worst. We see this with data centers: Hampton and Nost (2025) found that “communities within one mile of data centers not only tend to be disproportionately communities of color—relative to the national median—but also face particulate matter, nitrogen dioxide, and diesel particulate matter levels above the national median.” Data centers join nuclear power plants, food and vegetation deserts, toxic waste sites, CO₂-emitting factories, and polluted bodies of water on the list of conditions imposed on Black and brown people.

Financial Burden

Energy prices are skyrocketing in states across the country, with electrical grids working overtime to meet data centers’ energy demand. Energy companies aren’t absorbing those costs; they’re passing them on to consumers, and low-income families are feeling it most.

AI as a Problem and a Solution?

Bennett and Rose (2025) acknowledge that AI can be both a problem and a solution. In their words, “AI can also help mitigate climate change by optimizing renewable-energy grids, monitoring deforestation, and modeling atmospheric systems (Bartczak and Block 2025). The key is balancing technological innovation with environmental stewardship.”

How should we approach environmental ethics surrounding AI in our teaching?

How to think

I’m most interested in how these revelations inform the ways we talk about AI ethics with colleagues and students. O’Donnell and Crownhart remind us not to get caught up in chastising individual behavior:

“Tallies of AI’s energy use often short-circuit the conversation—either by scolding individual behavior, or by triggering comparisons to bigger climate offenders. Both reactions dodge the point: AI is unavoidable, and even if a single query is low-impact, governments and companies are now shaping a much larger energy future around AI’s needs.” - O’Donnell and Crownhart, 2025

Instead of saying things like “Well, [insert unethical behavior] is worse!” or “You’ll get left behind if you don’t use AI!” (fearmongering that shoves late-stage capitalism in people’s faces), I plan to point to macro-level impacts on the environment: deforestation, pollution, environmental racism, and the lack of federal regulation to mitigate these harms.

I’m the product of a liberal arts education, one that prided itself on teaching learners not what to think, but how to think. I try to embody this in my teaching, faculty development, and writing. The truth is that all of us benefit and suffer from capitalism. Instead of telling others how they should benefit and suffer, I try to expose our privileges and burdens and challenge others to reckon with these truths when faced with ethical dilemmas.

How we navigate these dilemmas is influenced by our values. That’s why dialogue about AI ethics is so important—it helps conversants recognize, reframe, and act in accordance with their values in striving toward ethical decision-making.

I used genAI (mostly Perplexity) to conduct research for this article. I recognize the toll that my AI use takes on the environment. I’m also using these tools to reduce several weeks’ worth of gathering sources, reading, and writing to about 15 hours of work. This allows me to regularly bring you free, high-quality content on ways to dismantle oppressive systems within, and encroaching upon, higher education. I’m at peace with my ethical orientation to AI use, and I’m open to changing my approach if presented with new insights. I also don’t judge anyone who refuses to use AI for ethical reasons, or who uses these tools in ways that exceed my personal boundaries. I urge my colleagues to lead with this same lucidity.

How to teach

My views on teaching remain the same: Instructors should always invite students to co-create AI expectations in their courses, though instructors should have the final say. We can do this by co-creating our AI policies or by facilitating discussion around an existing policy. There are effective examples of no-tech, low-tech, and AI-empowered classrooms; teaching effectiveness depends largely on how those expectations are determined, communicated, and incentivized. I teach an education technology course, and I ask students to use AI sparingly, typically at the very beginning of the course during synchronous class time. This gives every student the chance to learn to use it responsibly if they find it helpful in their learning, while also empowering their ethical decision-making.

What to say

The next time someone raises (or dismisses) environmental concerns in an AI conversation, I plan to tell them something like this:

Data centers’ energy use is relatively small compared to the energy consumed by homes, transportation, and manufacturing. But the amount of energy used by data centers is growing rapidly, and that energy is getting dirtier. I want to see data centers use clean energy to mitigate their environmental costs, and I want to see people of color have the same agency over their living conditions as their white counterparts. Real people are being negatively affected by AI growth, and the situation is worsening for those people.
