The Case Against TurnItIn

TurnItIn profits from students’ unpaid labor—without their consent.

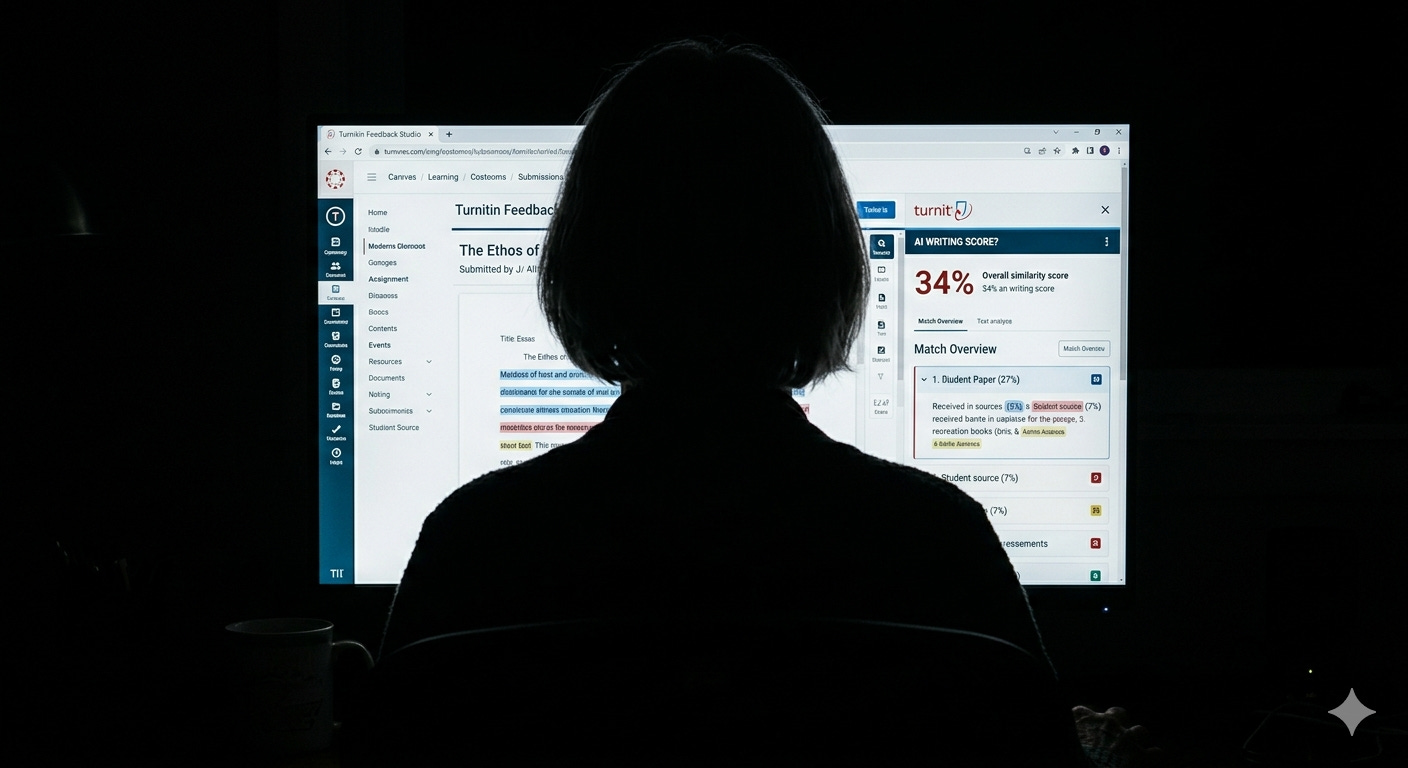

In a recent piece titled “Is TurnItIn Accurate?” I focused on one of the many reasons to be skeptical of TurnItIn’s AI writing detection feature.

My criticism of the tool’s accuracy has so many layers that it deserved its own post. The company cites two-and-a-half-year-old studies to defend its claims, anecdotal evidence points to an increase in false positives on student writing (especially in STEM courses), and the tool fails to detect nuanced hybrid use cases. The article you’re reading now addresses the other reasons to be wary of AI detection software.

“Some platforms are not agnostic. Not all tools can be hacked to good use. Critical digital pedagogy demands we approach our tools and technologies always with one eyebrow raised.” - Morris and Stommel, A Guide for Resisting Edtech: the Case against Turnitin.

TurnItIn profits from students’ unpaid labor—without their consent.

TurnItIn is a for-profit company, among the likes of Instructure, Microsoft, Panopto, and other edtech service providers. What you may be surprised to learn, however, is that TurnItIn is owned by Advance Publications, a billion-dollar media giant whose holdings include Condé Nast (the publisher of Vogue) and major stakes in household brands such as Reddit and Warner Bros. Discovery.

Behind TurnItIn is a team of skilled sales professionals tasked with selling a suite of products designed to “Guide responsible AI use, identify AI-generated writing, and deliver secure digital assessments with solutions designed with and for the global academic community.”

The truth is that many students and instructors in the ‘global academic community’ don’t know how AI detection software works: what writing characteristics it looks for, how its algorithm functions, and how accurate it is in practice. Many are also unaware of how the company scans, stores, and monetizes student work.

Here’s a snippet from TurnItIn’s Terms of Service:

“If You [the student] submit a paper or other content in connection with the Services, You hereby grant to Turnitin (and, if necessary for providing the Services its affiliates, vendors, service providers, and licensors) a non-exclusive, royalty-free, perpetual, worldwide, irrevocable license to use such papers, as well as feedback and results, for the limited purposes of a) providing the Services, and b) for improving the quality of the Services generally.”

Although TurnItIn “shall not obtain the right to use concepts or ideas set forth in papers submitted to the site,” it can’t generate similarity reports and AI writing scores without analyzing hundreds of millions of student papers. TurnItIn isn’t just selling anti-plagiarism software; it’s selling the database that the tool runs on.

One could argue that TurnItIn’s most profitable product is built on the backs of students. Since universities agree to these terms through enterprise subscription purchases, and since this software is built into learning management systems and used at the sole discretion of instructors, there’s no way for students to opt out of having their work repurposed by a company that makes millions in revenue from their labor.

How many students would opt out of having their work analyzed by detection software if they could? A portion would do so to cheat without getting caught. Others would refuse the tool to avoid playing defense attorney against false positives, or because of environmental and privacy concerns. But if students were presented with this critical lens (that plagiarism detection software uses their work to power a revenue-generating tool that, in many ways, threatens their academic and personal wellbeing), I imagine the number who would consent would be close to none.

Is breaching students’ privacy and autonomy worth catching the cheaters? Universities are effectively saying yes.

Detection tools fuel confirmation bias.

When you consider how plagiarism detectors are used in online learning environments, you realize how bias seeps into even the most well-intentioned attempts to guide students’ responsible engagement in their courses.

Depending on how an LMS’s grading interface is configured, instructors can see AI writing scores without reading a single word of a paper. If the score seems low, the instructor might read the paper with relief, even excitement. If the score seems high, they might read with a more critical eye, searching for evidence of AI writing they otherwise wouldn’t look for. This confirmation bias hurts all students, but especially those who used no AI or used AI in ways that complement rather than hinder their learning.

The following scenarios illustrate tool-driven confirmation bias:

An instructor thinks a student misused generative AI. They treat the AI score as confirmation without seeking any information that would challenge their initial assessment.

The instructor doesn’t think the student misused AI, but a high AI writing score changes their mind.

The instructor isn’t sure whether their student misused AI. The AI score tips them in one direction or the other.

In each of these common scenarios, the tool shapes the instructor’s judgment with evidence that is, to put it tamely, unreliable.

Additionally, a high AI score is almost always perceived as a sign of learner deficiency. High AI scores are rarely, if ever, seen as an indictment of the instructor’s teaching or course design: the unclear instructions, high-stakes grading, culturally irrelevant assignments, and didactic teaching style that drive misuse. If TurnItIn is a one-edged sword, the students are always the ones facing the blade.

Detection software stifles student learning.

Imagine this scenario: you are assigned a 24-hour ‘responsibility officer’ whose sole job is to ensure the integrity of your work. Every time you open your laptop, sit in front of your monitor, or open a work email on your phone, the officer hovers over your right shoulder, tracking all of your search queries, ideas, and distractions. How would the looming presence of this officer affect your work? How would this human surveillance system impact your sense of freedom? Self-efficacy? Wellbeing?

To understand how TurnItIn impacts student learning, it’s helpful to see TurnItIn and other tools and strategies like automated proctoring software, document version history, and downloadable chatbot threads as surveillance technology. Students today are aware that they’re being watched by non-human entities as they engage with the online components of their classes. In courses with a heightened focus on AI detection, they know their document version histories, chatbot threads, and time spent in a document or LMS can be subpoenaed by their professors at any moment.

Once the assignment is submitted, they know that a non-human entity, one that cannot be reasoned with or held accountable, may accuse them of something they didn’t do. Some students, especially high-performing students at elite universities, are taking drastic self-surveillance measures to defend themselves: some set up a camera in the corner of the room, recording themselves working for hours in the event they have to fight against a cheating allegation. Others are taking screen recordings and screenshots. Some are even pasting their work into web-based AI detectors (like turnitin.app) to combat the results generated by the LMS-integrated tools.

How do you think all of this impacts students’ learning? Sure, it may deter some students from misusing generative AI, but how does it affect the students who (want to) use it responsibly and those not using it at all? The student who feels the need to record themselves working may be less willing to walk away from the laptop for a much-needed break. A student who knows they may need to hand over their chat history may take fewer intellectual risks, or they might use ‘safe’ prompts to avoid judgment from their instructor. A student who fears a false positive from TurnItIn may dumb down their writing to get a lower AI score.

There are student-centered approaches to monitoring and supporting work processes, like requiring AI acknowledgment statements, assigning metacognitive oral reflections, facilitating peer review, and having students start projects in groups during synchronous class time. These efforts do more than TurnItIn to promote a culture of learning, integrity, transparency, and what Jesse Stommel calls “scholarly generosity.”

AI literacy becomes an afterthought.

In their 2017 article, Morris and Stommel share discussion questions that can spark critical analysis of plagiarism detection tools:

Who owns the tool? What is the name of the company, the CEO? What are their politics? What does the tool say it does? What does it actually do?

What data are we required to provide in order to use the tool (login, e-mail, birthdate, etc.)? What flexibility do we have to be anonymous, or to protect our data? Where is data housed; who owns the data? What are the implications for in-class use? Will others be able to use/copy/own our work there?

How does this tool act or not act as a mediator for our pedagogies? Does the tool attempt to dictate our pedagogies? How is its design pedagogical? Or exactly not pedagogical? Does the tool offer a way that “learning can most deeply and intimately begin”?

How could instructors and students engage with these questions and reasonably determine that TurnItIn should be used in their course? I ask this earnestly; if there are examples or rationales, I’d love to hear them.

My guess is that most students aren’t asked these types of questions in courses where TurnItIn is used. I’m aware of the barriers preventing faculty from having these conversations with students: class time, class size, limited awareness, required compliance with program- or school-level AI policies, and personal views on genAI are all valid reasons. Not asking these questions, however, denies students the right to resist generative AI on their own terms. Not asking these questions ignores the agency that many learners will have over whether and how they use generative AI in their work and lives beyond graduation. Not asking these questions maintains the status quo: instructors telling students whether or not to use AI.

When faculty lean on detection software, they lose the ability to articulate why AI writing alone is insufficient and which skills students must demonstrate in their writing that AI can’t. We (instructors) can’t control whether and how students use generative AI. But we can encourage them to make their work more human, more creative, more original, and more effective. Can an AI writing score help with this articulation? Potentially. But I’ve also seen it derail conversations about learning entirely.

More human alternatives exist.

I’ll admit that TurnItIn results are a simple and scalable way to describe problematic use of AI, as are the characterizations of student work as lacking “flavor” and “authentic voice” that often accompany them. But just because these methods are simple and scalable doesn’t mean we should ignore more rigorous, human alternatives.

A helpful place to start is ensuring that every aspect of your course is designed for integrity. I’m talking about a robust course-level AI policy (or an activity for designing one with your students), assignment-level AI guidelines, guidance on when and how to cite AI, flexible assignment deadlines, opportunities to resubmit assignments, culturally relevant and multimodal assignments (instead of busy work), flat grading (instead of high-stakes grading), transparency measures like AI acknowledgment statements and metacognitive reflections, and modeling effective use or refusal of AI yourself, among other strategies.

After this self-audit, you can reflect on your grading process. Can you begin grading assignments with TurnItIn turned off? If you suspect AI writing without TurnItIn guiding your thinking, try stating your concerns as specifically as possible. What exactly about the work feels depersonalized or stiff? Does it not fit the writing style you expected? Does it fail to quote material or moments from class? Does the paper open with a sentence that restates the prompt? Are the citations or direct quotes inaccurate? Is the writing overly generic, lacking specific stakeholders (and if so, what would be an example of more specific writing)? Does it lack a clear audience? Is the writing structure similar to that of specific chatbots? (ChatGPT, for example, favors the construction “It’s not about this—it’s about that.”) What does the assignment policy say about AI use? How does the work reflect or ignore such guidance?

From there, you might draft a message describing what you see and why you’re concerned. You can withhold a grade until the student resubmits since, after all, we’re asking students to demonstrate their learning. Emphasize that you’re not trying to punish them, but you do need to ensure that they themselves are doing the thinking.

Offer accessible opportunities to help students meet your expectations. Quite literally, offer to meet them where they are: in the campus library, in the dining hall, or over the phone if they’re too busy or unable to do a video call. If a one-on-one conversation is necessary, give them a sense of the questions you plan to ask and the materials and evidence you want them to bring to the conversation. For minor errors, like inaccurate citations, you might deduct points that could be added back if they resubmit with revisions.

These options aren’t perfect, nor are they easy, but they are human.

Need a speaker or facilitator on inclusive teaching in the age of AI? Learn more about my faculty development work at jdspeaks.com/educators.
